The FooSIN project, in collaboration with the ANR project D2KAB, organised the 3rd AgroHackathon on August 29 and 30 at the Institut Agro Montpellier.

The event was supported by INRAE, DigitAg and Labex NUMEV.

It brought together 27 participants: 6 coaches and 11 competing teams.

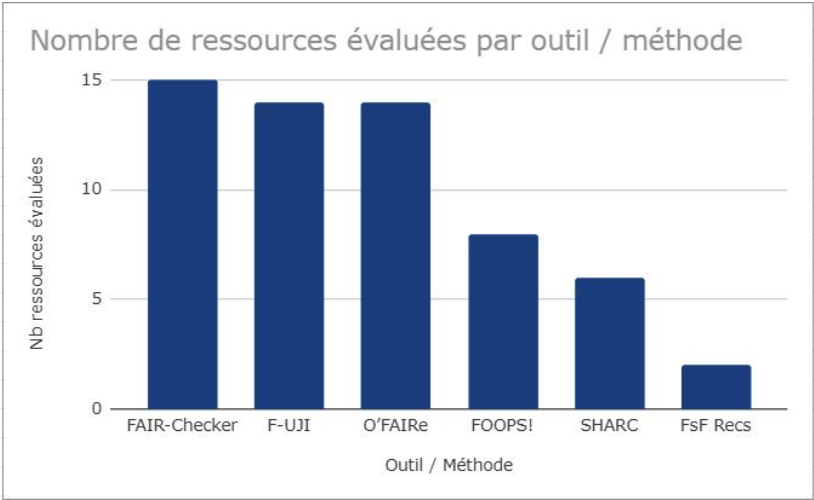

The twenty or so participants were able to test 6 tools and methods for assessing the level of FAIRness (that is, compliance with the FAIR principles) of resources of their choice. Accompanied by 6 coaches directly involved in developing these tools and methods, the participants calculated an initial score. With the help of the coaches, they then reworked the description of the resources, in particular the metadata, in order to improve this score. The final ranking rewarded the teams that achieved the largest difference between the initial score and the final score.

The participants had at their disposal:

- 4 automatic tools, including:

  - 2 general-purpose tools: FAIR-checker and F-UJI

  - 2 dedicated to semantic resources: O'FAIRe and FOOPS!

- 2 self-assessment questionnaires/methods: SHARC and the FAIRsFAIR Recommendations

Nearly 20 resources (datasets, ontologies and thesauri) were assessed with the tools and questionnaires. All teams improved their FAIRness scores during the AgroHackathon, with an average increase of around 10% (once the metrics are normalized).
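To illustrate how scores from tools with different scales can be compared, here is a minimal Python sketch of a normalized score difference and the resulting ranking. It is not the organisers' actual procedure: the normalization (raw score divided by the tool's maximum, scaled to 0-100), the `Assessment` structure, the team names and all values are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    team: str         # hypothetical team name
    initial: float    # raw score before reworking the metadata
    final: float      # raw score after reworking the metadata
    max_score: float  # maximum raw score on the tool's scale (assumed known)


def normalize(raw: float, max_score: float) -> float:
    """Map a raw score onto a 0-100 scale (assumed normalization)."""
    if max_score <= 0:
        raise ValueError("max_score must be positive")
    return 100.0 * raw / max_score


def improvement(a: Assessment) -> float:
    """Normalized final score minus normalized initial score."""
    return normalize(a.final, a.max_score) - normalize(a.initial, a.max_score)


def rank(assessments: list[Assessment]) -> list[tuple[str, float]]:
    """Order teams by decreasing improvement."""
    gains = [(a.team, improvement(a)) for a in assessments]
    return sorted(gains, key=lambda pair: pair[1], reverse=True)


# Made-up values for illustration only, not results from the event.
EXAMPLE = [
    Assessment("team-a", initial=12, final=15, max_score=24),
    Assessment("team-b", initial=60, final=65, max_score=100),
    Assessment("team-c", initial=40, final=60, max_score=80),
]

if __name__ == "__main__":
    for team, gain in rank(EXAMPLE):
        print(f"{team}: +{gain:.1f} points")
```

Normalizing before taking the difference keeps a 3-point gain on a 24-point scale from being compared directly with a 5-point gain on a 100-point scale.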

The feedback was very positive on all sides: the coaches were confronted with widely varied real-world cases brought by users on site, and the competitors all became more familiar with the tools and more aware of the FAIR principles.

Among the impressions and comments gathered at the end of the hackathon, the following stand out:

- The importance of being clear about which identifier is given as input to a tool (descriptive web page, URI, DOI).

- A great need for metadata harmonization (which expected properties and values, which recommended vocabularies?).

- The importance of clearly detailing the indicators/criteria evaluated and how changing the resource can affect the evaluation.

- The difference between tools/methods that examine the content of resources and those that examine only their metadata.

- The need for more illustrative examples in the tool interfaces.

- The need for interfaces to take into account the new use case created by the tools: a cycle of hosting, evaluating, correcting, and so on.

- A proposal to harmonize results from one tool to another.

This event, supported by the FooSIN project, was a "pilot" and we plan to reproduce this type of "FAIRness hackathon" competition in different forms, with other FAIRness assessment tools and of course beyond the agri-food theme.

The context of the Horizon Europe FAIR-IMPACT project, which focuses on the adoption of FAIR principles in EOSC, will be ideal for replicating this type of event on another scale!